Monday, 19 September 2022 by Ontrack Team

In this article, we investigate what may be causing your hard drive clicking sound and provide some practical fixes you can try yourself.

Friday, 9 September 2022 by Ontrack Team

A ransomware attack is one of the biggest threats facing online users. In this article, we explore what happens during a ransomware attack, and the steps you need to take to secure your organisation in the aftermath.

Monday, 29 August 2022 by Tilly Holland

Disaster recovery plans and business continuity plans should be an essential part of every organisation. In this blog, we give you six critical points that you should consider when putting together your plans.

Wednesday, 24 August 2022 by Tom Nevin

In this article, we take a look at the computing world’s arch nemesis – the ‘blue screen of death’. But is it as bad as you think? Here we share how the BSOD can actually help you get back online quicker.

Monday, 18 July 2022 by Ontrack Team

Hardly a day goes by without a corporate IT system or a privately owned computer being infected by ransomware. Every time, the result is the same: the victims are blackmailed with high monetary demands. The problem is so acute that reports in reputable news media have intensified in recent weeks.

Tuesday, 28 June 2022 by Ontrack Team

Disaster recovery plans are critical for every organization. In this blog, we detail cyberattacks, ransomware, natural disasters, and much more.

Wednesday, 1 June 2022 by Ontrack Team

Our tape services team provides clients with peace of mind when accessing legacy data on tapes and virtual backup environments. Get in touch to discuss how Ontrack can help get your legacy data under control.

Wednesday, 1 June 2022 by Ontrack Team

What to do when you have deleted files from a desktop, laptop or external/portable hard drive.

Saturday, 28 May 2022 by Tom Nevin

Want to wipe data from your old smartphone? In this blog, we explain how best to achieve this.

Thursday, 7 April 2022 by Ontrack Team

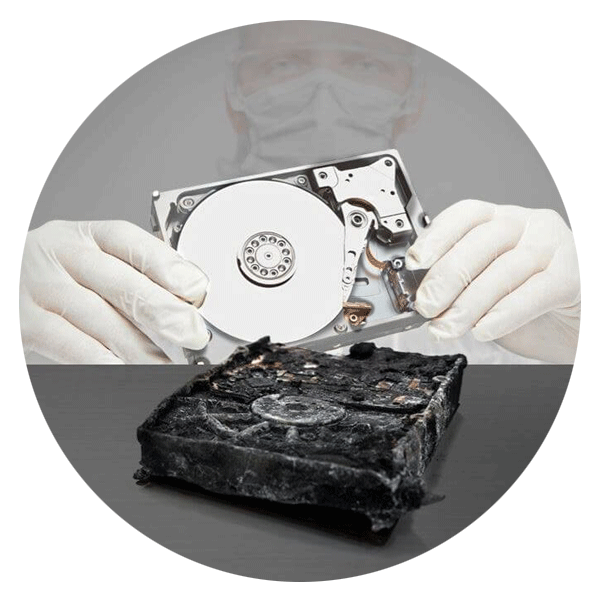

So what is a corrupted hard drive (HDD) and how do you recover your data? Is it a good idea to use data recovery software? Where can you look for help? We answer these common questions in this blog.

Thursday, 31 March 2022 by Ontrack Team

Ontrack has been the global leader in server, RAID, ransomware and mobile data recovery services and data recovery software solutions since 1987.

Monday, 28 March 2022 by Ontrack Team

At Ontrack, our experienced team of engineers will always do what they can to recover your required data. There are a few factors which affect our chances of recovering data from a device, and therefore how much data we’re able to get back for you.

Thursday, 24 February 2022 by Michael Nuncic

To help you decide on your next purchase of spare storage or additional storage for a corporate data centre, this study looks at the three key features that distinguish an enterprise-class SSD from a client-class SSD: performance, reliability, and endurance.

Friday, 7 January 2022 by Ontrack Team

The lifespans of hard drives can vary between devices, but they will all fail at some point. Learn more about how you can identify if your hard drive is about to fail.

Tuesday, 4 January 2022 by Ontrack Team

With victims that range from extensive government agencies to unsuspecting individuals browsing the internet, ransomware can wreak havoc that harms not only your digital device but your bank account as well.

Saturday, 1 January 2022 by Ontrack Team

Ontrack’s team has been hard at work creating the latest version of the OPC product suite with the goal of providing a better user experience for customers.

Monday, 20 December 2021 by Ontrack Team

Need help figuring out what’s causing your hard drive to fail? Take a look at our rundown of the most common hard drive error codes and how to fix them.

Thursday, 18 November 2021 by Mikey Anderson

In this episode of Storage Board, we’ll take a look at RAID data storage and find out how it works.

Wednesday, 17 November 2021 by Ontrack Team

A multinational client found themselves in desperate need of emergency RAID 5 recovery after noticing that their company’s crucial financial data and office files had disappeared from headquarters.

Thursday, 4 November 2021 by Ontrack Team

Even modern servers and storage systems run RAID technology. It is found mostly in enterprises, but it has become more prevalent in consumer NAS systems as well. RAID has survived for more than 30 years, and it still plays a major role in data storage to this day. Why is that? Glad you asked.


- Cloud (5)

- Crypto Currency (2)

- Data Backup (12)

- Data Erasure (5)

- Data Loss (11)

- Data Protection (8)

- Data Recovery (40)

- Data Recovery Software (7)

- Data Security (9)

- Data Storage (9)

- Deleted Data (4)

- Disaster Recovery (5)

- Encryption (2)

- Expert Articles (2)

- Hard Drive (HDD) (19)

- Laptop/Desktop (8)

- Mobile Device (14)

- NAS (1)

- RAID (5)

- Ransomware & Cyber Incident Response (9)

- Server (1)

- Solid State Drives (SSD) (13)

- Tape (10)

- Virtual Environment (4)